In the past I’ve written and presented on OBI performance from the ‘before perspective’:before you begin development, what are the things you should plan on and how should you implement them. This paper however is with the ‘after perspective’; what to do if you are stuck with a performance problem that you need to solve quickly. It will use the Financial Analytics (All versions) application from Oracle’s BI Applications (aka the BI Apps) to walk through a plan of attack and demonstrate specific changes using the Oracle Database 10x. Thus, this paper has two purposes:

- Specifically to document actual changes for Financial Analytics

- Generally to walk through a performance tuning effort that you yourself may undertake

Note: You do not need to be working on Financial Analytics or even the BI Apps for the concepts in this article to apply. It merely uses Financial Analytics as its example, and where appropriate I will explain the model.

I’m going to do something a bit different with this article in that I will tell the story of a recent performance tuning project for a client. A previous integrator had delivered Financial Analytics and it was up and running in a production environment, but the performance was terrible. Many queries were over 8 minutes. We were asked to tune the GL Transactions star, but the lessons learned here will work for all modules of Financial Analytics, regardless of version. In fact, implementing them for only one star actually boosted the performance of the other Financial Analytics Subject Areas.

I hope to impart the many aspects of performance tuning to highlight areas which you should also look at when needed. As usual, I will focus on general concepts and key takeaways. Additionally, some knowledge of GL structure and Financials will be useful but not mandatory. Shown below are the basics of a Financial Analytics Star. Of course there are many more dimensions, but I removed many of them so we can focus on the real heart of the solution.

Assessment

After a quick review of the customer’s BI Apps implementation, what they had done, how bad the performance was and their key pain points, it was time to get our hands around the problem and open up the hood. Myself and a small team gathered a large list of about 40 reports that were not performing up to the report author’s expectations. We made sure we had good coverage from a variety of key ad-hoc power user/report developers – focusing onbreadth was more important than depth. It turns out that all of the reports in question were developed by these power users; none were Out-of-Box (OOB) reports or modified versions of them.

Next, I did a review of each report and cataloged it. We ran the reports, looked at their logic and how they were built, and tried to determine a few things about each one:

- Was there heavy logic in the report?

- Did it have unions?

- Was it filtered properly or at all (e.g., did it have a time filter)?

- Did it do anything unusual?

- Was it limited by not having a good field to use?

SQL Catalog

Next, I needed to gain some detailed information about what they were doing and how they were doing it. Once I had done this, then I could look for patterns and ultimately recommend an aggregation strategy (more on that later). To do this I cataloged each one using their generated SQL from the OBI Query log. The result of this helped me to understand the following:

- Tables used

- Joins

- Which tables had filters and selects

- Based on the above, determine each report’s granularity

- Baseline each report’s performance to measure improvement.

Understanding the grain was the ultimate goal of this exercise – it will become useful for identifying aggregate candidates later on. Note I was not interested in the metric calculations or other report logic – this is more about tables than fields. Complex logic will be dealt with later after the bases are covered.

Baselining

We had to demonstrate gains and understand if the changes were helping or not, so baselining performance was important as well. For consistent baselining, we broke each report out into its own separated dashboard and had only 1 report per page so that its execution could be isolated and results more consistent. Make sure to run each report multiple times and take an average. I also recommend to clear out Oracle Buffer Cache (and result cache if you use that in 11g (which you should!)) between each execution to as not to taint the results:

alter system flush shared_pool; alter system flush buffer_cache; BEGIN dbms_result_cache.bypass(FALSE); dbms_result_cache.flush; END; /

Note also we ran these reports as both Administrator and as a user account to see the effects of Data Security on the reports.

Key Take-Aways:

1) Isolate and capture accurate performance numbers right away

2) Use the SQL to gain a big-picture understanding of what is happening. Focus on Breadth.

Report Layer

Normally, BI performance is all about the data model and database features. You’ll look at partitioning, star transformations, bitmapped indexes, etc. to improve performance, and we’ll get to that in a short bit. But that assumes that your reports are built correctly and that your data model is used properly within the BI tool. It’s a waste of time to tune a model if you are looking at the wrong queries.

I had noticed that some of the queries were returning thousands of rows from the database, but the reports only showed a few dozen rows, fewer if a pivoted report. This happens when the grain of the views (charts, Pivots, etc) is different than the grain of the Select portion of the criteria tab. Generally speaking, anything that is not a metric will appear as a group by in the SQL. If there are extra columns in the group by list, the resulting grain will be lower than what is actually needed.

It was clear that we had to do a report grain clean-up pass. Essentially, this amounted to removing things from the criteria tab that were not needed in the output. Usually these columns get there as a developer is building the report, they put a filter on those columns and never remove them. To demonstrate the problem:

GL Account Example:

The GL contains a series of complex account codes which are actually glued together segments in the form Seg1-Seg2-Seg3-Seg4-Seg5… . The number of segments is different for each customer; my customer was only using 3. Each of these segments has a large hierarchy associated with it, with many codes and descriptions.

Consider a report were we show dollars by Segment 1’s hierarchy. If there are 10 Segment1 values, 20 Segment 2 values, and 15 Segment3 values, we expect only 10 records to come back. But if the developer left Seg2 and Seg3 on the select list, we can expect about 10x20x15 records to return (3,000 rows). We’ll have less if Seg2 and Seg3 were filtered on in a prompt, but the point still remains. 3,000 rows will take much longer to process inside of the database than 10 will, will take longer over the network, and OBI will then still have to aggregate them further to get to the final 10.

Thus, we compared the selected grain with displayed grain and made some deletions which resulted in a very nice performance gain. Plus, we could now worry about analyzing and tuning proper queries instead of bogus ones. Don’t forget to do a proper regression test of the reports to ensure you haven’t broken anything!

Key Take-Aways:

1) Report construction matters too – start with it as it affects the entire stack.

The Data Model

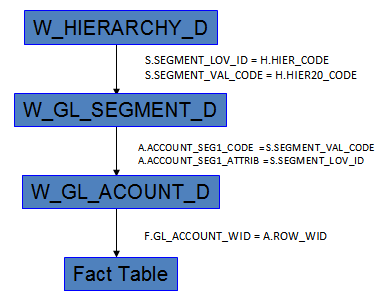

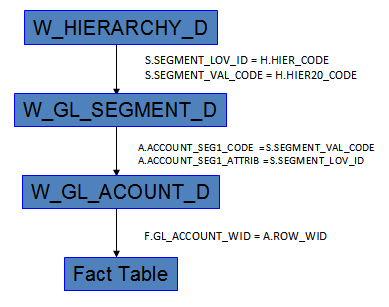

Armed with proper SQL, I then drew the picture of what was happening in order to see the Data Model. Financial Analytics is designed as a multi-level snowflake schema instead of a clean star. When you look further at the joins between the segment tables, you’ll notice that they happen to be the same fields:

In the diagram above, W_HIERARCHY_D could just as easily been joined to W_GL_ACCOUNT_D as W_SEGMENT_D as they join on the same fields. W_SEGMENT_D actually provides no value to these queries and in fact just gets in the way! But why stop there? The W_GL_ACCOUNT_D table holds the linkages that quite frankly should be in the fact table. Since W_GL_ACCOUNT_D is usually a relatively large table as compared to the segment and hierarchy tables, the database optimizer will have to process a 3 step linkage to use W_HIERARCHY_D and the Facts together. This causes problems for two reasons:

- Its extra join work by the database that is not needed

- It is more complex for the Oracle database to handle

The result of both of these problems is poor or ineffective use of Star Transformations. Star Transforms are a powerful feature that Oracle has to process a typical DW type query on a Star Schema. If Oracle is able to properly deploy a Star Transform, it can lend to significant performance gains. More on Star Transformations in the next section.

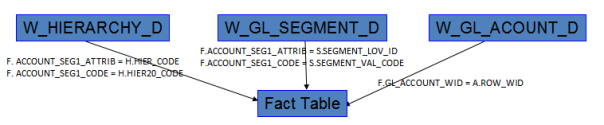

The solution ultimately breaks up this snowflake model and forces a clean star model:

This of course means that the Fact tables need to be extended with the necessary fields for each segment used. Some of the OOB tables already have these FKs added to them, especially the aggregates. In my customer’s case, I needed 3 segments total, and each join was a 2 part composite FK consisting of ACCOUNT_SEGn_ATTRIB and ACCOUNT_SEGn_CODE. These fields are easily obtainable in the ETL during the lookup to W_GL_ACCOUNT_D that gets the GL_ACCOUNT_WID for the Fact table.

A bit about Star Transformations

Star Transformations are a highly optimized feature of the Oracle Database since version 8. They are the best way to handle a selective query that hits a very large fact table. In the case of Financial Analytics, many of the queries are in fact highly selective – they filter on segment hierarchies, codes, Account numbers, Ledgers, Orgs, Time and some others as well. I suggest you read a very popular document by Jeff Maresh about Star Transformations called “Supercharging Star Transformations”.

I do want to discuss when and why star transforms are good and when they are not. Recently I’ve been on a different project where we tried Star Transforms, but they turned out to be slower than a more traditional approach, so don’t assume that they will always be suitable for you.

Star Transforms are best when:

- You have a clean star model and not a snowflake or bridge table

- Your fact table is very large (when you need them most!)

- There are several dimensions that are commonly used together to access it

- You are ultimately looking for a small piece of the fact table

In other words, if you have a clean model with highly selective queries identified by many dimensions, then Star transformations are for you. Selecting all of the records for a given month and adding them up is an example of where a star will not work well. With that in mind, Star Transforms may not you’re your more summarized reports, but should provide a better benefit for more detailed ones. Luckily, Financial Analytics suits these requirements very nicely.

Key Takeaway:

1) Star Transforms are best for highly selective queries which use many pieces of information to select a small subset of a much larger table.

RPD Modeling

Merely drawing a picture of how you’d like the joins to work does not make it work that way of course. To change the joins you will have to open up the RPD and begin some remodeling work. I will overview the basics of what needs to be done in order to implement this change. This section is very specific to Financial Analytics, so if you’re not interested in it or the RPD in general then skip ahead.

We want to do two main things: 1) remodel things that are in our scope – Fact tables and Segments, and 2) leave everything else alone so that it still works properly. #2 on that list will cause us to do some things that may not be needed if we were only doing #1 – this is the BI Apps after all and we have to play nice with other pieces. We also want to do only the work that is needed, so if you are only using 4 segments then ignore the other 6 that are unused. Since much of our work is on conformed dimensions, there will be some cases where these changes are only partially implemented.

With that in mind, we want to have the following 4 types of join paths:

- For in-scope fact tables and used segments: This is the optimal path.

- For in-scope fact tables and not-used segments: (Same path as OOB)

- For out-of-scope fact tables and used segments: (Slightly altered OOB model – still uses GL Account though)

- For out-of-scope fact tables and not-used segments: (OOB Join path)

The reality is that in order to support all of the above, the W_GL_ACCOUNT_D table will need to be aliased to support two different join paths – New and OOB.

| Fact Table | Segment/Hier | Join Path |

| In Scope | Used |

|

| In Scope | Not-used |

|

| Out of Scope | Used |

|

| Out of Scope | Not-used |

|

The main steps for the RPD work are as follows:

- Split up the W_GL_ACCOUNT_D to support the two join paths:

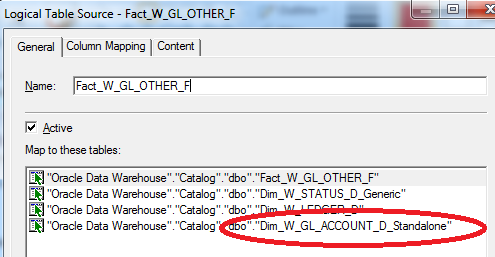

- Make another Alias for W_GL_Account_D and signify it as “standalone” or “Star”. Remove joins to any of the in-scope segments and hierarchies.

- Add it to the Dim GL Account Logical Table as a new LTS :

- Replace it in any in-scope Fact LTS. In many of these LTSs the W_GL_ACCOUNT_D table is included in the source for calculations and also for reaching to the W_SEGMENT_D table. The Replace Table wizard will help you here. You should end up with:

- Extend the Fact table with the SegN_Code and SegN_Attrib column pairs and join straight to W_HIERARCHY_D from the in scope fact tables.

- The final step is important to ensure proper usage in an ad-hoc environment and for other reports. It will ensure that one can use W_GL_SEGMENT_D directly without having to filter on W_HIERARCHY_D first, which a user may not always do. Break apart the logical Segment tables so that W_GL_SEGMENT_D and W_HIERARCHY_D are their own standalone logical tables:

- Make a segment logical table with only the W_GL_SEGMENT_D table in it. Use the same LTS filter from the original.

- Clean out the OOB GL Segment tables by removing the W_GL_SEGMENT_D table and switching the LTS filter to use HIER_CODE. Rename these tables to indicate Hierarchy as opposed to Segment.

- Link in these new segment-only tables to the in scope fact tables (Physical, Business Model and especially the Content Tab). Note the content tab & Business Model joins will have to be done for all other stars as well that use GL Hierarchies as GL Segment no longer lives inside the logical hierarchy table. Although easy to make this work, it can be mildly tedious.

- Remove the circular join by breaking the join from W_GL_SEGMENT_D to W_HIERARCHY_D.

- Replace the old segment columns in the Presentation layer with the new segment columns from the new Segment Logical table

- Remove the segment column from the Hierarchy logical tables

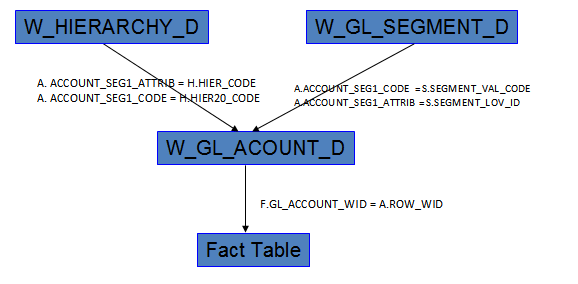

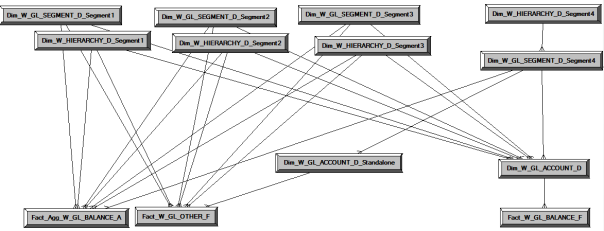

At the end of this involved re-wiring of the RPD, the join paths should look as in the diagram below. Note that Segment4 is a stand-in for all unused segments. Note the joins for the two GL Account D tables.

Thus:

1) This new model has Segment and Hierarchy completely separated and models them as different dimensions.

2) It maintains a link to unused segments in the same way as OOB, but using a different W_GL_ACCOUNT_D table.

3) The used segment & hierarchy tables are forever split, and as such they operate with other fact tables in a slightly different join path. Note they are still separate, but the work of going straight to the fact is not done.

4) The OOB GL Account table maintains the links for all other stars to ensure the model still works. Note that a lin

At this point, the main RPD modeling rework is completed. You should notice a nice performance boost right away. In looking at the Explain plans for the SQL, you can see how it is employing a good and complete Star Transformation. In fact, we’ve added more selectivity to the Fact table, which we know is a good thing for stars. Oh, don’t forget to regression test as much as you can at this point.

Partitioning

Partitioning of fact tables is pretty much something you should always do if you have even mild data sets. Financial Analysis tends to do lots of month (also known as Period) level analysis, along with some quarterly and yearly work. Partitioning by month makes perfect sense.

Although partitioning has benefits for administration, backup, even ETL, what we’re interested in is performance! Specifically, we’re interested in Partition Elimination. A partitioned table is really just a bunch of physical tables glued together logically. With a table partitioned by month, all data for a given month only goes into that one underlying table called a partition. If a query asks only for that one month, Oracle will only use that one partition and ignore all of the others. Hence, you can potentially ignore most of the data your table has. Queries that need more than one partition will include only those it needs.

In the newer version of the BI Apps 7.9.6+, Oracle has moved to a multi-calendar date table, W_MCAL_DAY_D. You will have to pick the appropriate date field in the fact table on which to base your partitioning. In some cases this may be difficult. For the GL Detailed Transactions Star which I focused on, it was quite easy: ACCT_PERIOD_END_DT_WID. Thus, partition your fact table on this field as follows:

PARTITION BY range (acct_period_end_dt_wid) ( partition P1 values less than (100120061231000), partition P2 values less than (100120070101000), … partition P61 values less than (100120111231000), partition P62 values less than (100120120131000) )

Oracle 11g introduces Interval partitioning to make this task a bit easier, so look into that if you have it.

Key Takeaways:

1) Partition fact tables in an effort to remove most of the raw data from your query.

Indexing

Next, I looked at indexing. Generally speaking, you want to have the following bitmapped indexes:

- On all FKs in your fact table. For us that means a) reviewing to make sure none are missing from OOB (there was one missing on DOC_STATUS_WID for my customer) and b) adding new bitmaps on the new fields added to the fact table. Note these should be local bitmaps, meaning they are also partitioned along with the underlying table. Thus a query that hits one partition only needs to look at a much smaller bitmap as opposed to a much larger one for the whole table (called global). Note for composite column FKs like our new segment code & attribute pairs, Oracle preferred single column bitmaps instead of a single composite bitmap of both code & attrib..

- On any fields used in the dimensions for filters. For Financial Analytics, this may mean:

- Any HIERx_code field in W_HIERARCHY_D. OOB only has a few of these, so this is a must.

- GL_ACCOUNT_NUM on W_GL_ACCOUNT_D. This is so common that I’m surprised it is not in OOB.

- Anything else such as ORG_NUM in W_INT_ORG_D.

The cataloging effort of the reports in scope will help determine which are needed:

Here I would build bitmapped indexes on Hier2_Code and Hier5_code (hierarchy_ID already exists).

At this point your performance may be good enough. For my customer, implementing these changes by themselves made massive improvements. Here is the summary of what we had achieved to this point without aggregates:

| 17 Reports: | Orig | No Aggs |

| Avg Report Time | 2:36s | 48s |

| # < 30 seconds | 4 | 13 |

| # < 10 seconds | 0 | 4 |

| # Meeting Customer Expectations | 1 | 14 |

The numbers above are slightly skewed unfortunately. I would love to be able to simply show you how impressive just doing the basic things above truly are, but unfortunately there are a few reports which skew the results due to the manner in which they were built (more on those towards the end). These problem reports need special attention.

Key Takeaway:

1) Index dimensional lookup fields with bitmap indexes

2) Index Fact FKs with local bitmap indexes

Aggregates

I like to say that aggregates are the last option when performance tuning for a good reason. Until you’ve done the things above, why both going through the effort of adding aggregate tables? You may not even need them. In other words, make sure your bases are covered with proper design and physical implementation before adding more work to your plate. Also recall that while Stars are good for selective (detailed) queries, they are not designed to be overly effective at summary queries.

I of course did not want to stop at only 4 reports being < 10 seconds, so I pushed on for even better numbers to completely surpass customer expectations. In any event, there is some very real benefit to be gained from Aggregates. Generally speaking, the rule of thumb for years has been to make aggregates that offer at least a 10:1 compression ratio. Unfortunately for us, the aggregates we built were not quite this good as far as the # of rows goes. But when you factor in column width into what you call ‘compression’, the numbers look much better:

| Aspect | W_GL_OTHERS_F | Agg Candidate #1 | Agg Candidate #2 |

| # Rows | 16M | 9M | 4M |

| Avg Row Length | 503 | 121 | 115 |

| Total Size | 8Gb | 1Gb | ~0.5 |

| Total Compression | N/A | 8:1 | 16:1 |

This is one of the very detailed downsides to anything generic – it is not tuned at all. It is impossible to do so and still be generic. The data models for the BI Apps contain an enormous number of fields in them, many of which are unused or populated with defaults (W_GL_OTHERS_F has about 125 fields!). They need to do this in order to support as many customers as possible. Unfortunately many of these fields do take up storage space, and as a result more I/O reads are needed to get a set of rows. When building our aggregates, we also threw out anything we didn’t need, resulting in a much narrower table, one where more rows can be crammed into a single I/O operation. Looking at the numbers above, although the rowset compression is not very good, the row length compression is about 4.5:1. When taken together, the resulting aggregates are very effective.

How to Determine Aggregates

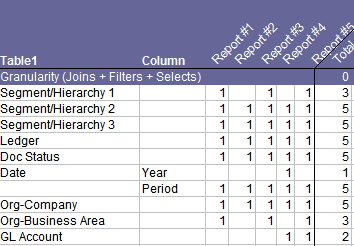

Although there is some art to it, there is a good deal of science as well. First and foremost, you have to understand the grains of your queries. (Protip: Know this right after requirements!) Going back to the beginning where I cataloged the grains of all of our queries a pattern emerged that drove the design of our two aggregates.

Examine the grains of the selects and the filters in these queries. If a table or known level is used by either, then identify it. Do this for each of the queries and a pattern emerges: (The actual analysis had 20 queries in it, but I’m showing only 5).

It appeared as if maybe we should have two different ones. The base one would have all of the dimensions shown above, and the higher one removed the GL Account dimension. For our sample, 2/3rd of the reports did not need the GL Account dimension. I went back and cross referenced the proposed aggregates to each report to determine if it would be used or not.

But did we need Agg1, Agg2, both, or none? The only way to be sure was to create the aggs, map them in and benchmark them. I recommend prototyping this as easily as you can with a CREATE TABLE AS SELECT statement. Although the lowest agg in our case may have been sufficient, the higher level agg impacted the performance of 2/3rds of our reports, and most of them in a meaningful way.

In the end, aggs are all about cost vs. benefit. If our higher agg#2 had only provided a nominal benefit (as measured by a small gain on a lot of reports or a larger gain on only a few reports), it would not have been worth the effort. Typical costs to assess include:

- More ETL and DAC coding

- More Data Model work

- More RPD work

- More QA

- Longer ETL Load times

- More objects to manage.

Ultimately, we elected to go with both as the benefit was there. Both aggregates will be added to the ETL load stream and integrated into the DAC for incremental processing. The RPD mapping is straightforward, and they directly link to the W_HIERARCHY_D and W_GL_SEGMENT_D tables just as with the base fact. Note: These tables were also partitioned by Month, on MCAL_PERIOD_WID.

Results

The results of the performance tuning effort were very good but not yet excellent:

| 17 Reports: | Orig | No Aggs | With Both Aggs |

| Avg Report Time | 2:36s | 48s | 31s |

| # < 30 seconds | 4 | 13 | 14 |

| # < 10 seconds | 0 | 4 | 10 |

| # Meeting Customer Expectations | 1 | 14 | 15 |

Back to the Reports

At this point we had met our performance objectives. However there were a few cases where the reports were still running slower than ideal. Going back to the reports, some of them had very complex logic in them. The reports showed the following problems:

- Hierarchy remapping via massive CASE statements. Some of these were monstrous, and resulted in queries totaling 11 pages of SQL! The obvious solution is to push these down into the ETL layer and provide the fields the users actually need to use. This will allow for much simpler SQL, less errors, greater consistency and better performance.

- Complex data logic. The subject area provided barely had any date fields in it to support what the users needed. Horribly painful logic persisted through many of the reports: CASE WHEN “Fiscal Period Num” between 1 and 3 Then 12 ELSE CASE WHEN “Fiscal Period Num” between 4 and 6 Then 3 ELSE …. Even after all of the changes above, this one report took 4 minutes to run. In looking at it in OEM, it was all CPU bound, churning through the complex logic. In some cases all that was needed was a “Fiscal Quarter End Date” and much of the complex logic can be eliminated. We will be sure to provide the tools the users need to get the job done optimally.

- Unnecessary Unions – some of the reports no longer needed their union queries. These are to be re-written, cutting the database load in half.

At this point we were forced to take on the problem reports one by one and see if there was a better way to build them. And in fact there was. There was still one report which was taking 4 minutes to run, and 2 were taking over 1 minute. These 3 were skewing our #’s wildly, and they simply had to be fixed!

Although we did not look at all three of the reports due to time, we did in fact look at the 4 minute report. The report author had been forced to build in a tremendous amount of manual logic to get the report to work. We were able to provide 2 new fields in the RPD which allowed us to re-write the report. We added a Quarter End Date to the Date dimension and a Dollar amount YTD to the metrics. With these in place, the report became exceedingly simple to build, and now it runs in 3 seconds.

Key Take-Aways:

1) Modeling and model tuning do have limits – they can’t do the impossible.

2) Make sure the report authors have the right tools to do the job. In our case, adding a more robust calendar table and a few time series metrics were all that was needed.

3) Once the tools are there, a report re-write can have enormous benefits.

Database

One thing that hasn’t been mentioned is database tuning. We did not have the ability to do this on our test database server as it was determined that DBA work should be done directly on Production. However it turns out the production database was already in good shape, and only one parameter was changed.

Key Take Away:

1) Database parameter tuning is not always the problem! Look at what your application is doing and how it is using the database first.

Summary

With addition time to rewrite the other reports, even those that were running in 12 seconds, plus pushing down complex logic into the ETL layer, I am confident that every report can run in less than 8 seconds and most likely in 5. We were getting decent result on reports with tremendously complex logic in them; simplify them and the results will only improve. And this is on a test database with lighter hardware power than production! Unfortunately the tuning project has ended before I could complete the final steps to really show you the end results.

| 17 Reports: | Orig | After Worst 4 Report Fixes | |

| Avg Report Time | 2:36s | 7s | |

| # < 30 seconds | 4 | 15/15 | |

| # < 10 seconds | 0 | 12/15 | |

| # Meeting Customer Expectations | 1 | 17 |

Again, there is still work to be done on these reports!

No comments:

Post a Comment